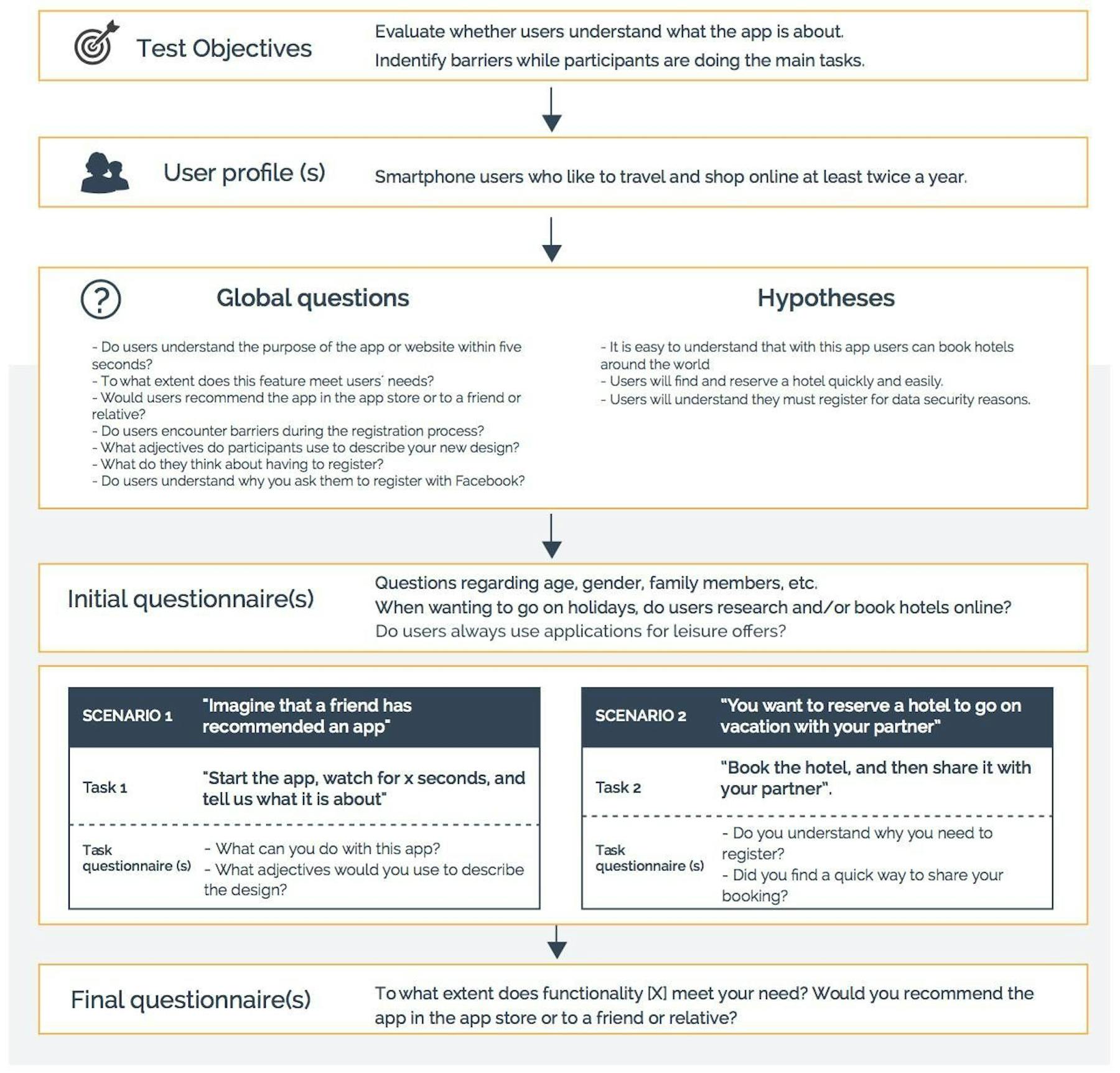

In this post we present a framework that helps you define your remote usability test, from creating the initial concept all the way to conducting your research. Once your script is finished, you will be able to quickly set up and run your test in a testing tool.

Why Should You Prepare a Framework?

Knowing how to prepare your test script before conducting an unmoderated remote task-based usability test is essential. A framework helps you to structure all the information you need to include in your usability test. Frameworks usually include a set of concepts, practices, and criteria that facilitate dealing with a common process or problem and serve as a point of reference for future projects.

What Are The Elements Of A Remote Usability Test?

1. Hypotheses and global questions: According to the objective of your test, you will first develop hypotheses and questions to ask participants.

2. Scenarios: To help users understand what you want them to test, you will need to provide them with context e.g. “Winter is approaching fast and you want to buy new boots.”

3. Task(s) for each scenario: Asking users to perform a task in line with your scenario allows you to validate your hypothesis and/or provides you with further context.

4. Questionnaires and their logic, i.e. where users are sent depending on their answers. During a test, there are three moments where asking questions comes in handy:

- At the beginning – Initial questionnaire: Used to categorize users and establish segmentation variables. In general, this is where psycho-demographic data such as gender, age, and profession is collected.

- After each task – End task questionnaire: Just after finishing a task, and before starting another one, you can use these questionnaires to collect more detailed feedback regarding the experience users had while performing a task.

- At the end – Final questionnaire: Gather information on the user experience and user satisfaction in general. You can also combine initial and final questionnaires e.g. by asking participants to rate a brand before and after the test in order to analyze how the user experience impacts brand perception.

5. Invitations and participants: All available information related to each participant, in particular the user ID your testing tool assigns to each person, the invitation message and its design, and the status of each participant, e.g. flagged as a cheater, abandoned the study, paused, or successfully completed.

6. Data, results, and analysis: Includes all study results. The UX metrics you obtain depend on the testing tool you use. You should at least gather qualitative data (user feedback via video and audio, browser videos including screen interaction) and quantitative data (success rates for each task, number of clicks needed to perform a task, time on task, clickstreams, satisfaction rates, difficulty ratings, and other issues reported by users).
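As a rough illustration of the quantitative side, the sketch below summarizes success rate, time on task, and clicks for a single task. The record layout and field names (`success`, `seconds`, `clicks`) are assumptions made for this example; the export format of your own testing tool will differ.

```python
from statistics import mean

# Hypothetical per-participant results for one task (made-up data).
results = [
    {"participant": "u1", "success": True, "seconds": 42.0, "clicks": 7},
    {"participant": "u2", "success": False, "seconds": 95.0, "clicks": 15},
    {"participant": "u3", "success": True, "seconds": 51.0, "clicks": 9},
    {"participant": "u4", "success": True, "seconds": 38.0, "clicks": 6},
]


def summarize_task(rows):
    """Return success rate, mean time on task, and mean clicks for one task."""
    if not rows:
        raise ValueError("no results to summarize")
    return {
        # True counts as 1 and False as 0, so the sum is the number of successes.
        "success_rate": sum(r["success"] for r in rows) / len(rows),
        "mean_seconds": mean(r["seconds"] for r in rows),
        "mean_clicks": mean(r["clicks"] for r in rows),
    }


summary = summarize_task(results)
print(summary)
```

With the sample data above, three of four participants succeed, so the success rate is 0.75.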

8 steps for creating your remote usability testing script

1. Define objectives

What global questions do you want users to answer? What hypotheses do you want to validate?

Defining only one or two test objectives will help you focus on the results you want to obtain. Each objective should aim at answering one important strategic question. From your hypotheses and global questions you can then derive the scenarios, tasks, and questionnaires you need in order to gather the feedback you want from your participants.

Questions that can help you define your objectives are: What first impression do users have? Do they understand your product and services? Does your business cover user / customer needs? Is your website easy to use? How do users evaluate your new design? How do users rate you compared to your competition?

Answers to these questions may turn into your test objectives:

- Evaluate whether users understand what the app or website is about.

- Locate purchasing or browsing barriers while participants are performing a task.

- Determine if the user experience is satisfactory.

2. Choose the right participants

If users perform a test in a real-world context, you will be able to see how they behave under real-world conditions, for example while browsing on their smartphone. It is therefore important that your testing tool allows you to conduct tests on different device types. The closest you can get to realistic usage is by inviting real users to take part in a study, either from your own database or by means of a panel provider. If your participants are also potential customers, they will know how to best describe their needs and problems.

By working with real user context you also obtain more actionable data and get valuable information on the time of use. This is not possible if you conduct a test in a usability lab since the context can sometimes distort the data.

The total number of participants depends on your website or app and the type of information you want to obtain. If the approach is purely qualitative, a small sample might suffice. If you are after quantitative data, your goal will be to find out how many of your users are affected by a certain UX issue, and estimating that share reliably requires a larger sample.
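To see why quantitative questions call for more participants, it can help to look at how uncertain an observed share is at different sample sizes. The sketch below uses the Wilson score interval, a standard statistical technique that is our choice for this illustration and not something prescribed by the framework. The sample sizes and the 40% share are made-up values.

```python
import math


def wilson_interval(affected, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if n <= 0 or not 0 <= affected <= n:
        raise ValueError("need n > 0 and 0 <= affected <= n")
    p = affected / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Same observed share (40% of participants hit the issue), different sample sizes.
for n in (5, 50, 500):
    affected = round(0.4 * n)
    low, high = wilson_interval(affected, n)
    print(f"n={n}: {affected} affected, plausible share {low:.2f} to {high:.2f}")
```

The interval narrows as the number of participants grows, which is why a handful of users can reveal that an issue exists but not how widespread it is.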

3. Select the questions and formulate hypotheses

After having defined the objective, it is time to focus on potential questions. They can be classified according to whether they are general (to collect psycho-demographic information such as gender, age, professional profile, family members, habits, etc.) or whether they require a context. For example, you will want to include questions users can only answer by interacting with your application and by performing a task. Tasks enable users to experience what you are asking about.

For example, imagine you want to test the purchasing process of your site. The winter collection has arrived and you’ve made changes to the check-out procedure, especially when users shop for winter shoes. You now want to invite users to test the new check-out. With this context, possible questions could be:

- To what extent does functionality X help users complete their purchase?

- Are there barriers during the registration process?

- What do users think about having to register?

- Do users understand why you request them to register with Facebook?

4. Create realistic scenarios

After having formulated research questions, you need to think about how to put them into context. This is why you need scenarios. Participants need to feel like they’re in a real context of use. Through scenarios users are able to understand why you ask them a certain question.

These are some example scenarios for our fashion website:

- A friend has recommended this web site to you.

- You want to go on a trip to the mountains and need new hiking shoes.

- You want to buy a Christmas present for your father for his upcoming skiing holiday.

5. Prepare the tasks

In order for users to answer your research questions they must interact with your app, mobile site, website or prototype first. The way you ask users to perform a task is important because it will influence how users behave while doing the test. You should link scenarios and use a consistent “story” to create the right testing context.

Make sure you establish a way to validate task success, e.g. by asking users to find a price, a certain page, or a specific piece of information:

- “Buy blue winter boots by brand X in your size, put them in your shopping cart and proceed with the check-out until you reach the page with the payment options. Write down the price of the boots.”

- “Your friend has recommended this web site to you. Access the homepage, take a look around for a minute, and tell us what fashion is on sale at the moment.”
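If you validate success by asking for a price, the check itself can be very simple. The following is a minimal sketch, assuming participants type the price as free text and that you know the expected value in advance. The function names, the sample answers, and the price of 89.95 are all hypothetical, and the parsing deliberately ignores thousands separators.

```python
import re


def parse_price(answer):
    """Extract the first number from a free-text answer, or None if there is none."""
    match = re.search(r"\d+(?:[.,]\d{1,2})?", answer)
    if match is None:
        return None
    # Accept both "89.95" and "89,95" as decimal notation.
    return float(match.group().replace(",", "."))


def task_succeeded(answer, expected_price, tolerance=0.01):
    """Count the task as successful if the reported price matches the expected one."""
    price = parse_price(answer)
    return price is not None and abs(price - expected_price) <= tolerance


print(task_succeeded("The boots cost 89,95 EUR", 89.95))  # True
print(task_succeeded("I could not find them", 89.95))     # False
```

Many testing tools offer their own validation mechanisms, such as reaching a target URL, so a check like this is only needed when you evaluate free-text answers yourself.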

6. Define questionnaires

Now that you have created the context (scenarios and tasks), it is time to define the questionnaires, not only to collect results but also to answer your hypotheses and strategic questions. Usually these questions are about opinions and perceptions and help gather in-depth information on the user experience.

During a study, three different stages for asking questions usually exist:

- At the beginning of the study: Perfect for collecting psycho-demographic data and information on brand perception or competitive benchmark data, before users experience your site or app.

- At the end of a task: In order to understand the whys behind user behavior.

- At the end of the study: A very common question is the Net Promoter Score (NPS) question, “How likely are you to recommend this web shop to friends or family?”, answered on a scale from 0 to 10 and used as an indicator of overall user satisfaction.
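For the NPS question mentioned in the last bullet, answers are given on a scale from 0 to 10, and the score is the percentage of promoters (9 or 10) minus the percentage of detractors (0 to 6). A short sketch of that calculation, with made-up ratings:

```python
def net_promoter_score(ratings):
    """NPS = percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not ratings:
        raise ValueError("no ratings given")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(r >= 9 for r in ratings)
    detractors = sum(r <= 6 for r in ratings)
    return 100 * (promoters - detractors) / len(ratings)


ratings = [10, 9, 8, 7, 6, 3, 9, 10, 5, 8]  # made-up sample answers
print(net_promoter_score(ratings))  # 10.0
```

Here four promoters and three detractors out of ten answers give a score of 10. Ratings of 7 and 8 count as passive and only affect the total.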

7. Double-check your test script content

In the following graphic we provide an overview of all framework parts to help you when reviewing your final script:

8. Configure your testing tool

When you are done planning your study along this framework, it is time to enter everything in your UX research software. The more closely your tool matches the framework presented here, the easier it will be to set up your project.

In other words, your remote testing tool should let you enter different types of questionnaires, scenarios, and tasks and should allow you to define when certain information or questions are shown to participants. If your testing tool is set up the same way as this framework, you won’t need to spend extra time and effort on adapting your script to your research tool. This will accelerate your research process.
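Questionnaire logic, i.e. deciding what a participant sees next depending on an answer, is usually configured in the tool's interface. To make the idea concrete, here is a minimal sketch of such branching. The step names, question text, and dictionary layout are hypothetical and do not correspond to any particular tool.

```python
# Hypothetical script fragment: each step maps possible answers to the next step.
QUESTIONNAIRE = {
    "owns_account": {
        "text": "Do you already have an account with this shop?",
        "next": {"yes": "task_login", "no": "task_register"},
    },
    "task_login": {"text": "Log in and buy winter boots.", "next": {}},
    "task_register": {"text": "Register and buy winter boots.", "next": {}},
}


def next_step(current, answer, script=QUESTIONNAIRE):
    """Return the id of the step a participant sees next, or None at the end."""
    step = script[current]
    # Normalize the answer so "No", " no " and "NO" follow the same branch.
    return step["next"].get(answer.strip().lower())


print(next_step("owns_account", "No"))  # task_register
```

Writing the branches down like this before opening the tool makes it easier to spot answers that lead nowhere.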

In summary:

- Define your objectives for the study: What global questions do you want users to answer? What hypotheses do you want to validate?

- Choose your participants: Do you want to invite real users? What key demographic are you after? How many participants do you need in total to get the information you’re after?

- Select your questions: What general psycho-demographic information are you after? What questions are needed to test your hypothesis?

- Create a realistic scenario: What are common user scenarios that occur on your site or app that you want to test?

- Prepare your tasks: What task(s) do you need to test in order to validate your hypothesis? How are you going to validate users being successful at the task?

- Decide where you want to place user questionnaires: At the beginning of your study, after each task, at the end of the study?

- Double-check your framework and configure the script in your testing tool.
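The summary above can also double as a checklist when you review a draft script. The sketch below assumes the script is kept as a simple dictionary; the key names and the sample draft are illustrative assumptions, not a format required by any tool.

```python
# Framework parts taken from the summary above; the key names are our own choice.
REQUIRED_PARTS = [
    "objectives",
    "participants",
    "questions",
    "scenarios",
    "tasks",
    "questionnaires",
]


def missing_parts(script):
    """List the framework parts that are absent or still empty in a draft script."""
    return [part for part in REQUIRED_PARTS if not script.get(part)]


draft = {
    "objectives": ["Find purchasing barriers in the new check-out"],
    "participants": {"source": "customer database", "count": 20},
    "scenarios": ["You need new hiking shoes for a trip to the mountains."],
    "tasks": [],  # not written yet
}
print(missing_parts(draft))  # ['questions', 'tasks', 'questionnaires']
```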